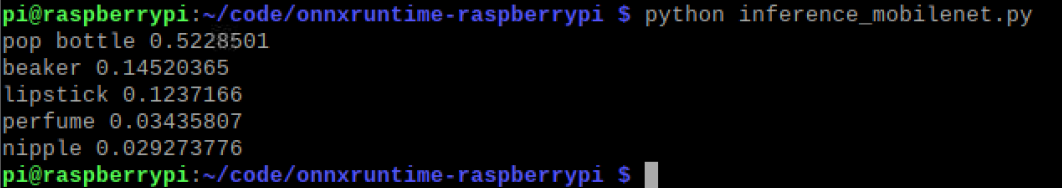

ONNXRuntime inference works well on Raspberry Pi 4 with Intel NCS2: step by step setup with OpenVINO Execution Provider - PUT Vision Lab

GitHub - nknytk/built-onnxruntime-for-raspberrypi-linux: Built Python wheel files of https://github.com/microsoft/onnxruntime for Raspberry Pi 32-bit Linux.

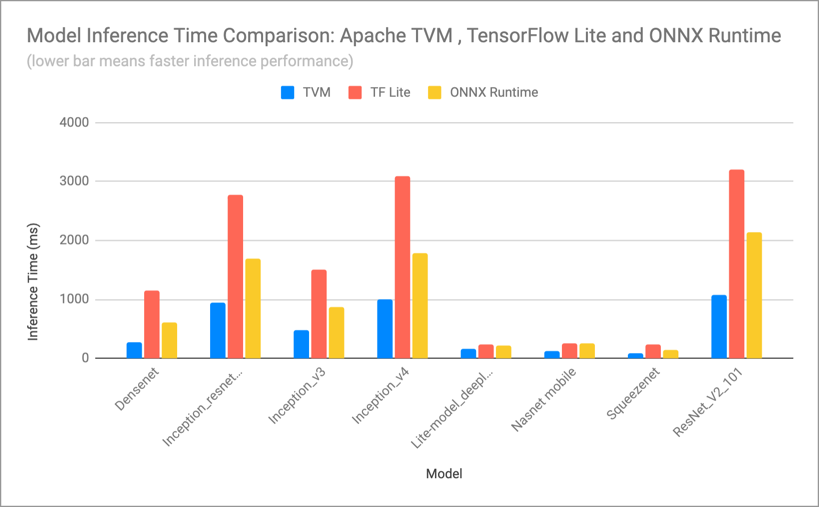

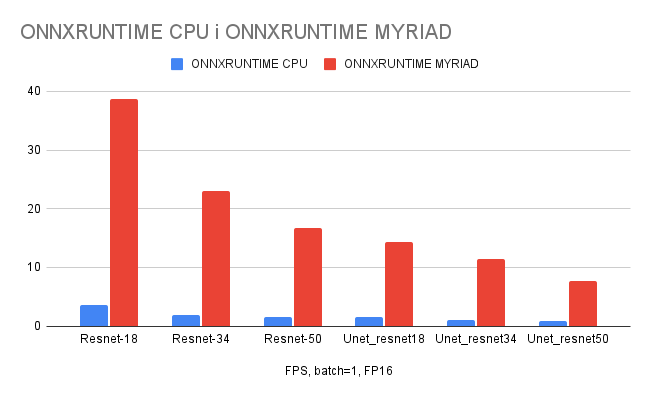

Performance analysis for different embedded platforms; FPGA, JX GPU, JX...